The Halting Problem: Why Perfect Predictability is Mathematically Impossible

Every executive wants a crystal ball. We want our QA teams to guarantee that the new software release is 100% bug-free. We want our project managers to tell us the exact date a complex migration will finish. We want to know, with absolute certainty, what a system will do before we turn it on.

We treat this lack of predictability as a failure of management or engineering. We assume that if we just wrote better tests, hired smarter developers, or implemented a stricter Agile framework, we could finally achieve perfect foresight.

We are wrong. The inability to perfectly predict complex systems is not a management failure. It is a fundamental law of mathematics, proven in 1936 by a 23-year-old genius named Alan Turing.

It is known as The Halting Problem, and understanding it is the key to shifting your organization from a fragile culture of prevention to a robust culture of resilience.

The Dream of the Universal Checker

To understand Turing's breakthrough, we must briefly return to the ghost of Bertrand Russell. Russell wanted to show that all of mathematics could be derived from pure logic. In the same spirit, another legendary mathematician, David Hilbert, posed a challenge to the world, known as the Entscheidungsproblem (the "decision problem"): Can we create a universal procedure that reads any mathematical statement and definitively decides whether it can be proved?

Alan Turing answered this with a thought experiment about computation.

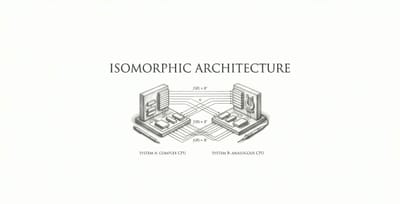

Turing asked: Can we write a "Master Program" (let's call it the Checker) that reads the code of any other program and perfectly predicts what it will do? Specifically, can the Checker look at a program and predict whether it will eventually finish its task and stop (Halt), or get stuck in an infinite loop and run forever (Loop)?

For the software industry, this Checker would be the Holy Grail. You would run all your code through the Checker before deployment, and it would warn you, "Do not deploy; this code will eventually freeze."

The Paradox That Broke the Machine

Turing proved that this Master Checker cannot exist. Not just that it is hard to build, but that its existence violates the laws of logic.

Here is the elegant, devastating proof: Imagine you actually built this perfect Checker. It works flawlessly. Now, Turing says, I will write a malicious new program called the Paradox Program. The Paradox Program asks the Checker to analyze it (the Paradox Program itself) and then does the exact opposite of whatever the Checker predicts.

- If the Checker predicts the Paradox Program will Halt, the Paradox Program intentionally goes into an infinite Loop.

- If the Checker predicts the Paradox Program will Loop, the Paradox Program immediately Halts.

The logic instantly collapses. The Checker cannot predict what the Paradox Program will do without contradicting itself. It is the Barber Paradox (from our Russell arc) translated into silicon.
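The contradiction can be sketched in a few lines of Python. This is an illustration, not Turing's original construction: the names `make_paradox`, `optimistic`, `pessimistic`, and `verdicts` are hypothetical, and to keep the sketch safe to run, the Paradox Program reports the branch it would take instead of actually spinning forever. Any candidate Checker you plug in is wrong about the Paradox Program built from it.

```python
from typing import Callable, Tuple

Program = Callable[[], str]
Checker = Callable[[Program], bool]  # True means "this program halts"


def make_paradox(checker: Checker) -> Program:
    """Build a program that does the opposite of what `checker` predicts."""

    def paradox() -> str:
        if checker(paradox):
            # Checker said "Halts", so the real program would loop forever.
            # We return a label here in place of `while True: pass`.
            return "loops forever"
        # Checker said "Loops", so the program stops immediately.
        return "halts"

    return paradox


def optimistic(program: Program) -> bool:
    """A candidate Checker that claims every program halts."""
    return True


def pessimistic(program: Program) -> bool:
    """A candidate Checker that claims every program loops."""
    return False


def verdicts(checker: Checker) -> Tuple[str, str]:
    """Return (what the checker predicted, what the paradox program does)."""
    paradox = make_paradox(checker)
    predicted = "halts" if checker(paradox) else "loops forever"
    return predicted, paradox()


for candidate in (optimistic, pessimistic):
    predicted, actual = verdicts(candidate)
    print(f"{candidate.__name__}: predicted {predicted!r}, actual {actual!r}")
```

The two toy Checkers are deliberately naive, but the trap does not depend on that. Whatever answer a Checker gives about its own Paradox Program, the program is built to do the other thing.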

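Turing proved that undecidability is baked into the fabric of computation. You cannot write a program that perfectly analyzes the behavior of all other programs. Many specific programs can be analyzed, but no general method works for every one. For an arbitrary piece of complex code, often the only way to find out what it will do is to run it and watch what happens. Even then, watching can confirm that a program halted, but never that it will run forever.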
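What "run it and watch" looks like in practice can be sketched as running a program under a step budget. In this minimal sketch, programs are modeled as Python generators where each `yield` counts as one step; that modeling choice and the names `run_with_budget`, `collatz`, and `spin` are assumptions made for illustration. Note that the runner can only ever answer "halted" or "unknown", never "loops forever".

```python
from typing import Callable, Iterator


def run_with_budget(program: Callable[[], Iterator[None]], max_steps: int) -> str:
    """Run `program` for at most `max_steps` steps and report what we observed."""
    steps = program()
    for _ in range(max_steps):
        try:
            next(steps)
        except StopIteration:
            return "halted"
    # Budget exhausted: the program may halt later, or never. We cannot tell.
    return "unknown"


def collatz(n: int) -> Callable[[], Iterator[None]]:
    """A program that halts once the Collatz sequence starting at n reaches 1."""

    def program() -> Iterator[None]:
        x = n
        while x != 1:
            x = x // 2 if x % 2 == 0 else 3 * x + 1
            yield

    return program


def spin() -> Iterator[None]:
    """A program that never halts."""
    while True:
        yield


print(run_with_budget(collatz(27), max_steps=1000))  # enough budget to see it stop
print(run_with_budget(collatz(27), max_steps=50))    # too small a budget to decide
print(run_with_budget(spin, max_steps=1000))         # no budget is ever enough
```

From the outside, the last two cases look identical. A bigger budget resolves the second one, but no finite budget can distinguish a slow program from one that never stops.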

The Strategic Reality: Stop Chasing Ghosts

What does a 1936 mathematical proof have to do with the Chief Wise Officer in 2026? It means that your obsession with perfect predictability is a fool's errand. The Halting Problem proves that no general method can decide, ahead of time, what an arbitrary program will do. The same lesson carries over, by analogy, to other sufficiently complex systems (software architectures, supply chains, organizational charts): no amount of up-front analysis can settle every question about their future states.

Here is how the Wise Officer adapts to a world where the Master Checker cannot exist.

1. Shift from MTBF to MTTR

Legacy IT departments optimize for MTBF (Mean Time Between Failures). They pour budget into massive QA environments, endless code reviews, and change approval boards, trying to predict and prevent every possible crash. They are trying to build Turing's impossible Checker.

Modern engineering organizations optimize for MTTR (Mean Time To Recovery). Because they know they cannot predict every failure, they invest in the ability to recover from failures quickly. They use feature flags, automated rollbacks, and microservices.

Strategy Rule: Do not spend 90% of your budget trying to prevent the unpredictable. Spend it on surviving the inevitable.
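The trade-off between prevention and recovery can be made concrete with the standard steady-state availability formula, availability = MTBF / (MTBF + MTTR). The numbers below are invented for illustration, not measurements from any real system, and `availability` is a hypothetical helper name.

```python
def availability(mtbf_hours: float, mttr_hours: float) -> float:
    """Steady-state availability: the fraction of time the system is up."""
    return mtbf_hours / (mtbf_hours + mttr_hours)


# Hypothetical baseline: one failure every 30 days, 4 hours to recover.
baseline = availability(mtbf_hours=720, mttr_hours=4)

# Option A (prevention): fail half as often, same recovery time.
fewer_failures = availability(mtbf_hours=1440, mttr_hours=4)

# Option B (resilience): same failure rate, but recover in 5 minutes.
faster_recovery = availability(mtbf_hours=720, mttr_hours=5 / 60)

for label, value in [
    ("baseline", baseline),
    ("fewer failures", fewer_failures),
    ("faster recovery", faster_recovery),
]:
    print(f"{label}: {value:.4%}")
```

In this made-up scenario, shrinking recovery time improves availability more than doubling the time between failures does. Real systems will differ, but the formula shows why MTTR deserves at least as much attention as MTBF.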

2. The End of the "Perfect" Roadmap

Gantt charts and waterfall roadmaps operate on the delusion that we can perfectly predict the halting time of human knowledge work. When a development team misses a deadline, leadership often demands more detailed estimates and more granular tracking for the next sprint. This is fighting mathematics. You are demanding a level of determinism that does not exist in complex systems.

Strategy Rule: Shift from date-driven roadmaps to goal-driven roadmaps. Measure progress by working software delivered, not by adherence to a fictional timeline.

3. Embrace Chaos Engineering

If you cannot analytically prove that your system is stable from the outside, you must test it dynamically from the inside. This is the philosophy behind Chaos Engineering (pioneered by Netflix's "Chaos Monkey"). Instead of trying to write a perfect testing suite to predict downtime, chaos engineers intentionally inject failures (killing servers, severing network connections) into the live production environment to verify that the system heals itself automatically.
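The idea can be sketched as a toy simulation. This is not how Chaos Monkey itself is implemented; the `Service` class, its `heal` method, and `chaos_experiment` are hypothetical names, and the "replicas" are just strings in a set. Each round kills one replica at random, checks that the service still answers, and then lets the supervisor restore capacity.

```python
import random


class Service:
    """A toy service backed by a pool of interchangeable replicas."""

    def __init__(self, desired: int) -> None:
        self.desired = desired
        self.replicas = {f"replica-{i}" for i in range(desired)}
        self._next_id = desired

    def handle_request(self) -> bool:
        """The service can answer as long as at least one replica is alive."""
        return len(self.replicas) > 0

    def heal(self) -> None:
        """Supervisor loop: start new replicas until capacity is restored."""
        while len(self.replicas) < self.desired:
            self.replicas.add(f"replica-{self._next_id}")
            self._next_id += 1


def chaos_experiment(service: Service, rounds: int, rng: random.Random) -> bool:
    """Kill a random replica each round; fail if the service ever goes dark."""
    for _ in range(rounds):
        victim = rng.choice(sorted(service.replicas))
        service.replicas.discard(victim)
        if not service.handle_request():
            return False  # outage observed: a single point of failure
        service.heal()
    return len(service.replicas) == service.desired


rng = random.Random(42)
print("3 replicas survive:", chaos_experiment(Service(desired=3), 100, rng))
print("1 replica survives:", chaos_experiment(Service(desired=1), 100, rng))
```

The experiment passes for the three-replica service and fails for the single-replica one. That failure is the useful result: it is found by injecting the fault on purpose rather than by trying to predict it.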

Conclusion: The Humility of Turing

Alan Turing did not destroy computer science by proving the Halting Problem; he liberated it. By proving what computers cannot do, he freed us to focus on what they can do.

As a leader, you must do the same for your organization. When you stop demanding perfect predictability, you remove the culture of fear and blame that surrounds failure. You stop asking your teams to be fortune tellers and start empowering them to be resilient engineers.

The next time a major system crashes in a way no one predicted, do not demand to know why the testing team didn't catch it. Remember Alan Turing. Some behavior simply cannot be predicted before the code runs. Build your company to survive the run.
