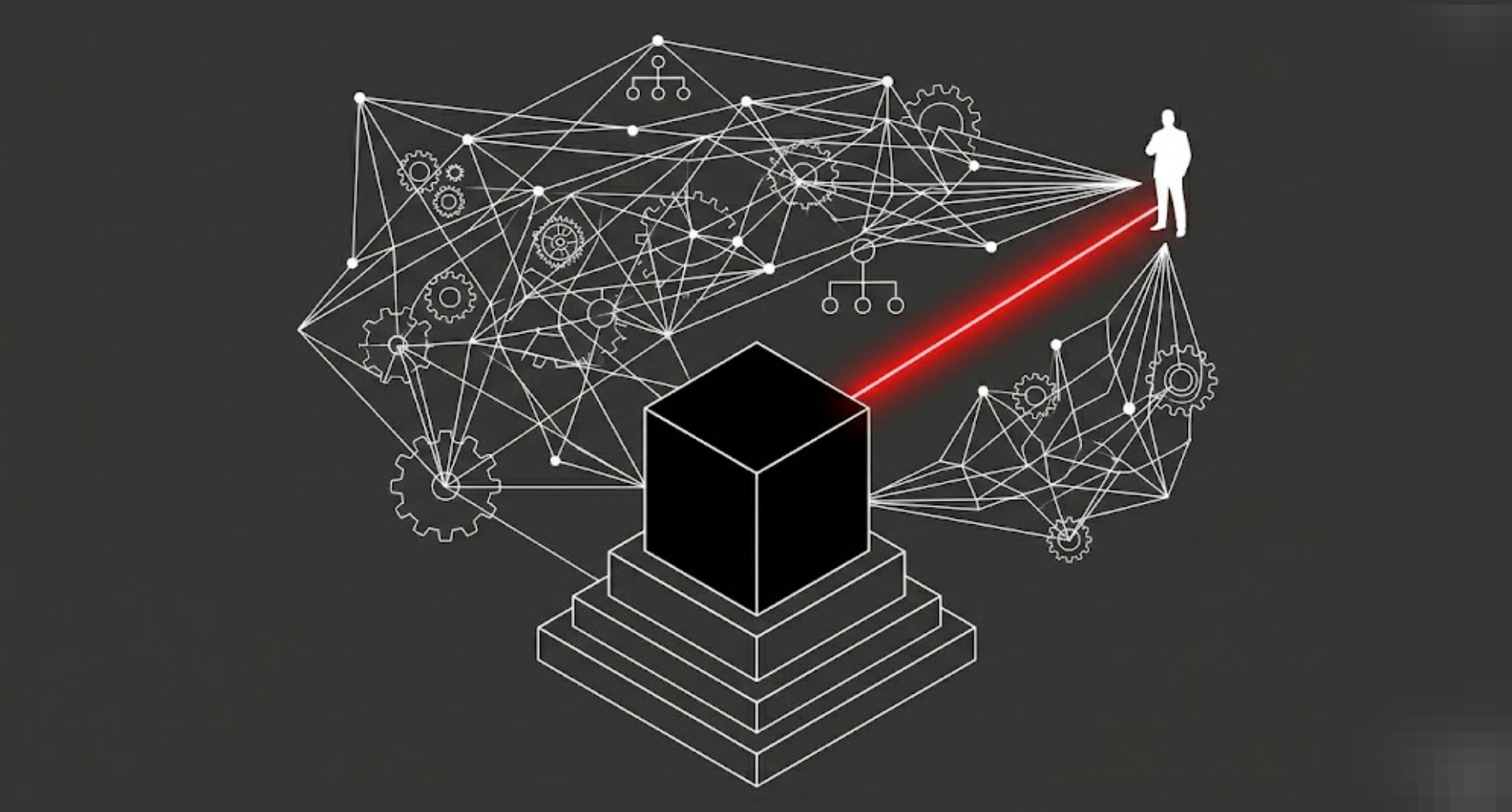

The Black Box: Executive Accountability in the Age of AI

The Diffusion of Algorithmic Liability

When a deterministic, rules-based system fails (say, a payment gateway drops transactions or a database schema is corrupted), liability is generally straightforward. The engineering team traces the logic, identifies the broken function, and deploys a patch. Accountability follows the code: the failure can be traced to a specific function and to the team that owns it.

However, the enterprise deployment of Large Language Models (LLMs) and autonomous agents introduces a profound structural ambiguity. If an autonomous sales agent hallucinates a massive, unauthorized financial discount, or an AI HR-screening tool organically develops a biased filtering policy, the chain of liability fractures. Executives frequently attempt to attribute these failures to the "black box" nature of the model, effectively treating the stochastic algorithm as a scapegoat. This creates a dangerous operational vacuum in which immense financial and reputational risk is taken on without corresponding executive accountability. The machine acts, but no human owns the consequence.

Heidegger and the Gestell of Governance

To navigate this ambiguity, we might examine Martin Heidegger’s The Question Concerning Technology. Heidegger argues that modern technology is not merely a collection of neutral tools but a specific way of revealing the world, which he calls Gestell (Enframing). Under Gestell, everything in the world, including human beings and organizational structures, is reduced to Bestand (standing-reserve): resources waiting to be extracted, optimized, and processed.

When the C-Suite deploys autonomous AI to handle hiring, pricing, or customer support, they frequently "enframe" the concept of executive decision-making itself. Governance is reduced to Bestand, an automated output to be optimized for efficiency. The black box becomes a mechanism to outsource fiduciary agency. However, Heidegger warns that while technology can obscure human agency, it cannot erase it. The illusion that a stochastic mathematical model can bear moral or financial responsibility is a fundamental failure of executive ontology. An algorithm cannot be held liable; it only executes the framework it was granted.

The Illusion of the Outsourced Executive

When faced with the ambiguity of AI governance, organizations typically adopt one of three prevailing defensive postures, each grounded in a logical but systemically fragile premise:

- The "Human-in-the-Loop" Rubber Stamp: Operations teams are tasked with reviewing AI outputs before they go live. While this theoretically retains human oversight, it frequently devolves into a rubber-stamp exercise. A mid-level manager, incentivized by processing speed and ticket volume, will rationally approve the AI's recommendations by default, creating the illusion of safety without actual governance.

- The Vendor Blame Game: Leadership relies heavily on the terms of service of foundation model providers (e.g., OpenAI, Anthropic). If the agent hallucinates, the organizational instinct is to blame the API. This typically fails because customers and regulators rarely hold the underlying vendor responsible for a localized corporate deployment; the brand deploying the tool bears the actual market damage.

- The Engineering Scapegoat: The C-Suite attempts to hold the engineering team accountable for the AI's probabilistic errors. This is structurally incoherent. Engineers are motivated by uptime, integration stability, and latency; they cannot deterministically control the emergent, stochastic outputs of a model with billions of parameters any more than they can control the weather.

Heidegger’s lens suggests these approaches struggle because they treat the AI as an independent actor that can absorb blame, rather than recognizing it as an extension of the executive's own strategic intent.

Architecting Fiduciary Accountability

To bridge the gap between autonomous technology and fiduciary duty, the organization should consider designing structural boundaries that force accountability back to the C-Suite:

- Tie AI Outcomes to Executive P&L: The department head utilizing the tool (e.g., the CRO for a sales agent, the CHRO for an HR tool) must bear direct financial responsibility for algorithmic failures. If a sales bot hallucinates a 50% discount, the margin loss must be structurally deducted from the CRO’s departmental budget, ending the practice of blaming IT for business-logic failures.

- Establish Deterministic Boundary Conditions: Shift engineering OKRs from "model accuracy" to "fail-safe architecture." Rather than trying to prevent an LLM from ever hallucinating (something that cannot be guaranteed for a probabilistic model), engineers should be incentivized to build deterministic "tripwires": hard-coded, traditional software rules that, for example, block any discount over 15% regardless of what the AI suggests.

- Elevate the "Algorithm Audit" to the Board Level: Treat autonomous AI deployments with the same governance rigor as a merger or acquisition. The deployment of a generative agent interacting directly with the public or capital should require explicit, documented sign-off from the executive sponsor, formally acknowledging their personal accountability for its emergent behaviors.
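The tripwire idea in the list above can be made concrete with a small sketch. It is not a reference implementation; it assumes the 15% cap from the example, and every name in it (`MAX_DISCOUNT`, `DiscountDecision`, `enforce_discount_cap`, the `accountable_owner` field) is hypothetical. The point is only that the check is ordinary deterministic code that runs after the model and before any discount is honored, and that each decision carries the name of the executive who owns it.

```python
from dataclasses import dataclass
from decimal import Decimal, InvalidOperation

# Hypothetical hard cap, taken from the 15% example in the text.
MAX_DISCOUNT = Decimal("0.15")


@dataclass(frozen=True)
class DiscountDecision:
    approved: bool
    applied_discount: Decimal
    reason: str
    accountable_owner: str  # e.g. the CRO for a sales agent (assumed field)


def enforce_discount_cap(proposed, accountable_owner, max_discount=MAX_DISCOUNT):
    """Deterministic check on a model-suggested discount (given as a fraction)."""
    try:
        discount = Decimal(str(proposed))
    except InvalidOperation:
        # The model returned something that is not a number at all.
        return DiscountDecision(False, Decimal("0"), "unparseable discount", accountable_owner)

    # Reject NaN/infinity, negative values, and anything above the cap.
    if not discount.is_finite() or discount < 0 or discount > max_discount:
        return DiscountDecision(False, Decimal("0"), "outside allowed range", accountable_owner)

    return DiscountDecision(True, discount, "within cap", accountable_owner)


if __name__ == "__main__":
    # A hallucinated 50% discount is blocked no matter how the model justified it.
    print(enforce_discount_cap(0.50, accountable_owner="CRO"))
    print(enforce_discount_cap("0.10", accountable_owner="CRO"))
```

Because the rule lives outside the model, it behaves the same way regardless of prompt, model version, or vendor. Blocked decisions could also be logged against the accountable owner, which fits the P&L and sign-off proposals in the same list.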

Conclusion: Owning the Black Box

An autonomous algorithm cannot sign a fiduciary oath, nor can it absorb the reputational damage of a market failure. The C-Suite must recognize that deploying a generative model does not absolve them of agency; it merely amplifies the scale of their latent responsibilities. True executive power is the willingness to stand before the unpredictable and structurally own the outcome.

"Thus we shall never experience our relationship to the essence of technology so long as we merely conceive and push forward the technological, put up with it, or evade it. Everywhere we remain unfree and chained to technology, whether we passionately affirm or deny it." — Martin Heidegger, The Question Concerning Technology
